Information Theory SG Seminar by Dr. Qibin Zhao on July 28, 2021

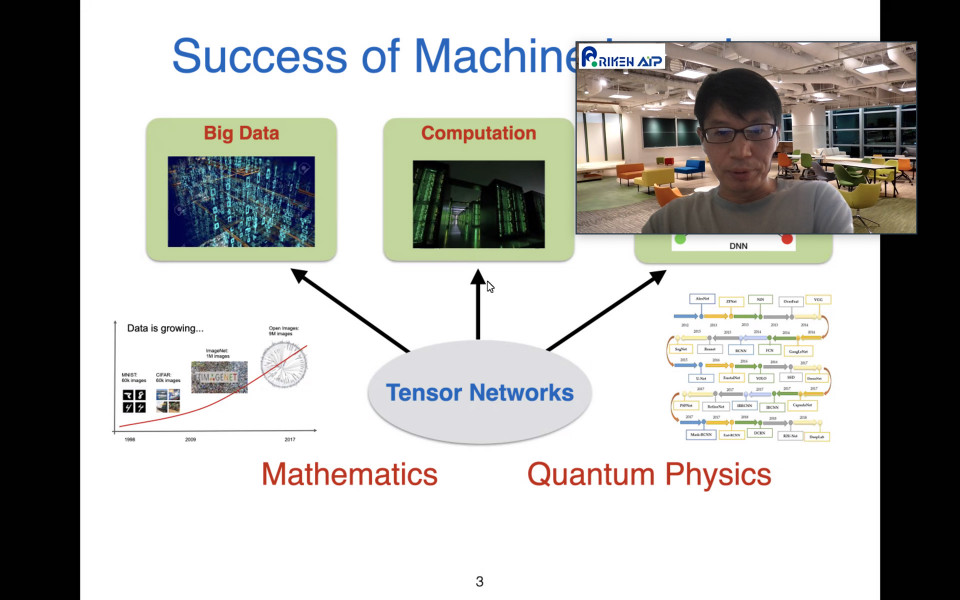

In the Information Theory SG seminar on July 28, Dr. Qibin Zhao gave an exciting talk on the basics and applications of tensor networks (TNs) in machine learning. His talk was divided into three parts: (i) tensor methods for data representation, (ii) TNs in deep learning modeling, and (iii) frontiers and future trends. In the first part, he started with a graphical introduction to tensors and dimension reduction methods. He then raised the important question of how incomplete data represented by tensors can be completed using low-rank approximation, and introduced several possible approaches with impressive examples. Here he explained TNs and decomposition methods in detail using diagrammatic notation, which can concisely express tensor operations such as contraction. In the second part, he talked about the application of TNs to model compression in machine learning. He illustrated how to represent a neural network model by tensors and how to learn the weights through contraction. Interestingly, the density matrix renormalization group (DMRG), which was originally proposed in quantum physics, may be used as a learning algorithm. In the last part, he gave an overview of recent topics in TNs and machine learning, such as TNs for probabilistic modeling and supervised learning with projected entangled pair states (PEPS). Intriguingly, the topological structure of a TN can be optimized for a given image; the learned topology depends strongly on the input data and is more complex than conventional simple structures such as lines, trees, or cycles. The clearly structured talk took us from the basics to cutting-edge subjects, and there were many questions and discussions during the talk. We are deeply grateful to Dr. Qibin Zhao for his excellent talk on this fast-growing interdisciplinary field.

Reported by Kyosuke Adachi